Why LLMs Are Powering the Next Generation of Software

LLMs have transformed how applications are designed. Their impact is visible in design, development, testing, and improvement processes across all industries.

- February 27, 2026

- by Tarun

Introduction

Software development is undergoing a significant change, similar in scale to the rise of cloud computing. LLMs have moved past being experimental tools and are now part of the whole software development process. With capabilities such as automated code generation, smart bug fixing, and help with system design, LLMs are changing how people work and what software systems can do.

Organisations are no longer asking whether to use LLMs. They are asking how deeply LLMs can be integrated into engineering work and product plans. This is because the models keep improving and new ways of developing software are appearing, such as AI-assisted design and context-aware software.

This article takes a close look at why LLMs are powering the next generation of software. It covers where they add clear value and the limits that engineering leaders need to keep in mind.

A Turning Point for Software Engineering

The adoption of LLMs is more than an incremental improvement to our tools. Traditional software automation relied on deterministic processes, such as compilers and pipelines. LLMs introduce a different model of development: they work probabilistically and reason over natural language.

Martin Fowler describes LLMs as a form of nondeterministic computing: the same input can produce different outputs. (The New Stack) This changes how engineers need to approach correctness, testing, and system design.

The main drivers of LLM adoption are:

- High-quality code-trained models are widely available.

- Teams need to release software faster.

- Modern systems are getting more complicated.

- Experienced engineers are in short supply.

- There is pressure to have AI in products.

Together, these factors have made LLMs a necessity in software development rather than an optional assistant.

How LLMs Change Software Development

The clearest sign of this change is the influence of LLMs across the software development life cycle.

Requirements and Ideas

LLMs are quite good at translating natural language into structured artifacts. More and more teams are using them for:

- Writing user stories

- Creating acceptance criteria

- Exploring edge cases

- Producing technical documents

This makes it easier for product, design, and engineering teams to work together.

Code Generation

The clearest effect is in code writing. Modern LLMs trained on large amounts of code can produce working code from natural language descriptions.

However, the biggest productivity gains come from using LLMs to assemble code and speed up the work, not from fully replacing people. Studies show that developers mostly use LLMs to generate basic building blocks and fix syntax rather than to build whole systems.

Debugging and Refactoring

LLMs are good at recognising patterns in source code, which helps them:

- Explain errors

- Understand what went wrong

- Suggest ways to rewrite code

- Update old code

This reduces effort for developers and gives them quicker feedback.

Testing and Quality

Some teams are using LLMs to:

- Make unit tests

- Generate edge cases

- Suggest tests

This change is small but important. Testing is no longer only a method of verification. It also becomes a way to brainstorm cases the team had not considered.

Documentation

Documentation has often been neglected because there is not enough time. LLMs change this by making it easy to create:

- API documentation

- README files

- Summaries of system setup and architecture

- Code comments

This makes software easier to maintain in large organisations.

How Much Faster Can We Really Work?

The claim that LLMs make software development dramatically faster is sometimes exaggerated. It is worth taking a balanced view.

Where the Gains Are Real

Studies consistently show improvements in:

- How fast developers can work

- How quickly new engineers can get started

- How much time is spent on routine coding

- How fast prototypes can be made

- How much mental effort it takes to switch between tasks

Software professionals say they are more productive and learn faster when using LLM-assisted tools.

Where to Keep Expectations in Check

Despite the gains, LLMs still have limits. They:

- Have trouble understanding what code actually means

- Can only look at a limited amount of code at once

- Can make things up

- Can introduce security problems

Martin Fowler points out that making things up is inherent to how LLMs work: it is a feature of the technology, not a bug.

A New Way of Developing Software

The next generation of software is not just made faster, but in a different way.

From Coding to Prompting

Vibe coding is an emerging trend. Developers describe their requirements in natural language, and an LLM generates the application.

This points to a bigger change: English is becoming a way to program.

However, strong engineering teams are already moving past simple prompting. They are starting to adopt more structured AI workflows.

AI Agents

The field is moving toward AI agents that work autonomously or with minimal supervision. These agents can:

- Plan multi-step coding tasks

- Execute toolchains

- Run tests

- Iterate on failures

- Open pull requests

This model, often called agentic coding, is expected to change how engineers work over the next five years.
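
The plan, test, and iterate cycle in the list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular agent product works: `agent_loop`, `stub_propose`, and `run_tests` are hypothetical names, and the stub stands in for a real model call.

```python
from typing import Callable, Optional


def agent_loop(
    propose: Callable[[str, Optional[str]], str],
    run_tests: Callable[[str], Optional[str]],
    task: str,
    max_iterations: int = 3,
) -> Optional[str]:
    """Ask for a candidate, test it, and feed failures back until tests pass."""
    feedback: Optional[str] = None
    for _ in range(max_iterations):
        candidate = propose(task, feedback)
        feedback = run_tests(candidate)  # None means every check passed
        if feedback is None:
            return candidate  # ready to hand to a human reviewer
    return None  # give up once the iteration budget is spent


def stub_propose(task: str, feedback: Optional[str]) -> str:
    """Hypothetical stand-in for an LLM: wrong at first, corrected after feedback."""
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def run_tests(source: str) -> Optional[str]:
    """Run one check against the candidate; return a failure message or None."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # a real agent would run this in a sandbox
        result = namespace["add"](2, 3)
    except Exception as exc:
        return f"error: {exc!r}"
    if result != 5:
        return f"add(2, 3) returned {result}, expected 5"
    return None


if __name__ == "__main__":
    print(agent_loop(stub_propose, run_tests, "write add(a, b)"))
```

The iteration budget matters in this sketch: without `max_iterations`, a model that never converges would loop forever, so the function returns `None` and leaves the decision to a person.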

Humans Still Needed

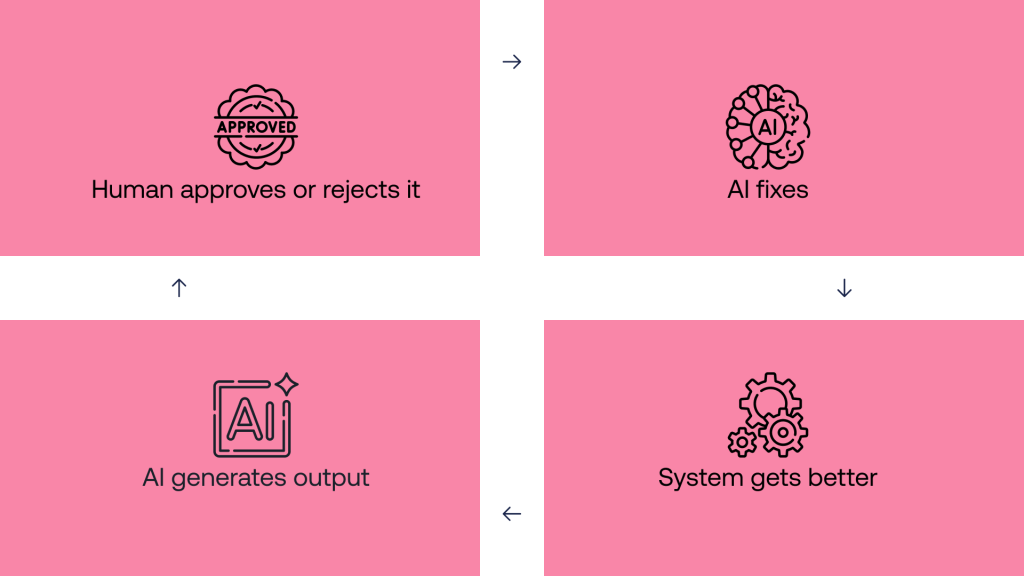

Rather than replacing the people who write code, the emerging pattern seems to be:

AI suggests → human approves → AI fixes → system gets better

Companies that make this a standard process are seeing the best results.
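
One way to make this loop a standard process is to encode the approval step as a merge rule. The sketch below is a hypothetical illustration: the `Suggestion` type and the `can_merge` function are invented for this example and do not correspond to any specific code-review tool.

```python
from dataclasses import dataclass, field


@dataclass
class Suggestion:
    """An AI-proposed change waiting for review (hypothetical type)."""
    diff: str
    author: str = "ai-assistant"
    approvals: list[str] = field(default_factory=list)
    checks_passed: bool = False


def can_merge(suggestion: Suggestion) -> bool:
    """Allow a merge only when checks pass and someone other than the author approved."""
    reviewers = {name for name in suggestion.approvals if name != suggestion.author}
    return suggestion.checks_passed and len(reviewers) >= 1


if __name__ == "__main__":
    change = Suggestion(diff="- return a - b\n+ return a + b", checks_passed=True)
    print(can_merge(change))   # no human approval recorded yet
    change.approvals.append("priya")
    print(can_merge(change))   # a human reviewer has approved
```

Excluding the author from the approval count is the design choice that matters here: an AI suggestion cannot approve itself.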

AI-First Software

LLMs are changing not just our tools, but the design of our systems.

Natural Language

Applications can now offer natural language interfaces, such as:

- AI copilots

- Intelligent search

- Contextual assistants

- Semantic knowledge systems

This changes what people expect from products.

Retrieval-Augmented Generation (RAG)

To reduce made-up information and improve accuracy, many systems now combine LLMs with information retrieval. This lets models ground their answers in retrieved data instead of relying only on what they were trained on.
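
The retrieval step can be illustrated with a small Python sketch. It makes several simplifying assumptions: plain keyword overlap stands in for the embedding search that production systems typically use, the documents live in memory, and no model is actually called. The names `retrieve`, `build_prompt`, and `DOCS` are hypothetical.

```python
import re
from collections import Counter

# Hypothetical in-memory knowledge base
DOCS = [
    "The billing service retries failed payments three times.",
    "The auth service issues tokens valid for one hour.",
    "Deployments run every weekday at noon.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, doc: str) -> int:
    """Count the words that the query and the document share."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum(min(q[t], d[t]) for t in q)


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return up to k documents that share at least one word with the query."""
    ranked = sorted(docs, key=lambda doc: score(query, doc), reverse=True)
    return [doc for doc in ranked[:k] if score(query, doc) > 0]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Put the retrieved passages in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, docs, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How long are auth tokens valid?", DOCS, k=1))
```

The prompt that reaches the model then contains the relevant passage, so the answer can be checked against a known source.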

Context-Aware Systems

Software is becoming:

- Context sensitive

- User-adaptive

- Always learning

- Semantically indexed

This is a shift away from hand-written logic toward reasoning layers.

Specialised and Domain-Tuned LLMs: The Enterprise Advantage

General-purpose models are just the beginning. The trend is now toward LLMs built for specific domains and tasks.

Why It’s Important

Models built for a specific task tend to be:

- More accurate

- Less likely to make things up

- Better at following rules

- Faster

- Cheaper to use

For software work, code-focused models tend to perform better than general-purpose models.

Enterprise Strategies

Companies are putting money into:

- Fine-tuning with private code

- Secure model hosting

- Prompt libraries

- Internal testing

This is becoming a source of competitive advantage.

Security and Reliability

Any serious discussion of LLMs in software development has to address risk.

Code Security

Recent studies show that AI-generated code often carries security risks. Research suggests that only about 55% of this code may be secure.

Common problems include:

- Insecure dependencies

- Improper validation

- Authentication flaws

- Injection problems

- Outdated cryptographic practices
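
To make one of these problems concrete, the sketch below contrasts a query built by string concatenation, a pattern that reviewers should watch for in generated code, with a parameterised query. It uses Python's standard `sqlite3` module with an in-memory database. The table, the function names, and the payload are invented for illustration.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    """Vulnerable: user input is pasted directly into the SQL text."""
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    """Safer: the driver binds the value, so it is never parsed as SQL."""
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "x' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # every row leaks
    print(len(find_user_safe(conn, payload)))    # no row matches the literal string
```

Both functions look similar at a glance, which is why this class of flaw is easy to miss when reviewing generated code quickly.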

Made-Up Information

LLMs can make up:

- Library functions and usage patterns

- Configuration options

This makes blind trust dangerous.
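
A cheap first check against invented libraries is to confirm that every module a generated snippet imports can actually be found in the current environment. The sketch below uses Python's standard `ast` and `importlib.util` modules. The function name `unresolved_imports` is hypothetical, and the check only covers top-level module names: it cannot tell whether a function inside a real library exists.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return imported top-level module names that cannot be found locally."""
    tree = ast.parse(source)
    modules: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports, which depend on the package layout
            modules.add(node.module.split(".")[0])
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


if __name__ == "__main__":
    generated = (
        "import json\n"
        "import totally_made_up_pkg_xyz\n"
        "from os import path\n"
    )
    print(unresolved_imports(generated))
```

A check like this fits naturally into a pre-review step, before a person spends time reading the generated code.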

Governance

Companies are responding by:

- Having humans review code

- Using automated analysis pipelines

- Validating AI output

- Applying prompt security controls

- Deploying private models

Companies that are making LLMs work treat them as helpful but risky.

Skills Transformation: New Skills Needed

The rise of LLMs is not eliminating software engineers. It is changing the skills they need.

More Valuable Skills

Engineers who do well in the AI era are good at:

- System design

- Architecture reasoning

- Testing

- Prompt engineering

- Data pipeline design

- Security

- Domain modelling

Less Valuable Skills

Routine coding is becoming less important, but this does not mean the fundamentals no longer matter. In fact, experienced engineers are still very important, because people who are new to coding often find it hard to judge whether the output of a large language model is correct.

AI-Augmented Engineer

The next-generation developer profile combines several roles:

- Software engineer

- System planner

- AI workflow designer

- Evaluator

This mix of roles can already be seen in teams that do well.

Economic Impact

From a business standpoint, the case for using large language models in software development is strong.

Cost and Speed

Companies report improvements in:

- Time to make a prototype

- Feature delivery speed

- Developer onboarding

- Support automation

- Tool efficiency

Even small gains add up.

Competition

Competitors that use AI are forcing companies to change. Those that don’t adopt LLMs may face:

- Slower releases

- Higher costs

- Weaker products

- Worse developer experience

This is pushing companies to adopt LLMs faster.

Limitations

Despite the progress, there are still some limits.

Context

Even though context windows are getting bigger, they still limit:

- Full-repository reasoning

- Long-horizon planning

- Deep architectural understanding

Semantic Understanding

Studies show that LLMs handle syntax well, but they are weaker at deep reasoning about how code behaves when it runs.

Reliability

Outputs are not always the same from one run to the next, so engineering teams need to build systems that can tolerate this variability.
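
A common way to handle this is to wrap the model call in a deterministic check and retry when the check fails. The sketch below assumes the model is asked to return JSON with a `summary` field. Both that contract and the names `call_with_validation` and `make_scripted_model` are assumptions made for illustration, and the scripted model replaces a real API call.

```python
import json
from typing import Callable, Optional


def call_with_validation(
    generate: Callable[[str], str], prompt: str, max_attempts: int = 3
) -> dict:
    """Call a nondeterministic generator until its output passes validation."""
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        raw = generate(prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = exc  # not JSON at all, so try again
            continue
        if isinstance(data, dict) and "summary" in data:
            return data  # output satisfies the assumed contract
        last_error = ValueError("missing 'summary' field")
    raise RuntimeError(f"no valid output after {max_attempts} attempts") from last_error


def make_scripted_model(responses: list[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM that replays canned responses in order."""
    iterator = iter(responses)

    def generate(prompt: str) -> str:
        return next(iterator)

    return generate


if __name__ == "__main__":
    model = make_scripted_model(["Sure! Here is the JSON", '{"summary": "ok"}'])
    print(call_with_validation(model, "Summarise the change as JSON"))
```

The calling code only ever sees validated output or an explicit error, which keeps the variability contained at one boundary.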

Security

The security of generated code has not improved as fast as code generation itself.

These limits will shape how AI engineering will move forward in the next few years.

What the Future Looks Like

Several patterns are already starting to emerge.

AI Will Be Everywhere

LLMs will be in:

- IDEs

- CI/CD pipelines

- Stacks

- Products

Multi-Agent Pipelines

Instead of simple prompts, systems will handle:

- Planning

- Coding

- Testing

- Security review

- Deployment

Constant Evolution

Software will:

- Self-improve

- Refactor

- Adapt designs

- Personalise

Private LLMs

Enterprises will want:

- Sovereign AI

- Private tuning

- Secure inference

Strategic Recommendations

Adopting LLMs calls for a careful, deliberate approach.

Prioritise high-value uses:

- Copilots

- Testing

- Documentation

- Tooling

Build evaluation into the workflow:

- Prompt evaluation

- Output scoring

- Testing

- Red-teaming

Maintain human oversight:

- Code review

- Scanning

- Validation

Invest in upskilling:

- Prompting

- System design

- Verification

- Secure usage
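
Output scoring, one of the evaluation practices listed above, can start very small. The following sketch computes a pass rate over a handful of prompt and check pairs. All names (`pass_rate`, `stub_model`, `CASES`) are hypothetical, and the stub returns canned answers in place of a real model.

```python
from typing import Callable

Check = Callable[[str], bool]


def pass_rate(generate: Callable[[str], str], cases: list[tuple[str, Check]]) -> float:
    """Return the fraction of cases whose output passes its check."""
    if not cases:
        raise ValueError("at least one evaluation case is required")
    passed = sum(1 for prompt, check in cases if check(generate(prompt)))
    return passed / len(cases)


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: one right answer, one wrong answer."""
    canned = {"Reverse 'abc'": "cba", "Uppercase 'abc'": "abc"}
    return canned.get(prompt, "")


# Each case pairs a prompt with a deterministic check on the output
CASES: list[tuple[str, Check]] = [
    ("Reverse 'abc'", lambda out: out == "cba"),
    ("Uppercase 'abc'", lambda out: out == "ABC"),
]

if __name__ == "__main__":
    print(f"pass rate: {pass_rate(stub_model, CASES):.0%}")
```

Tracking this number across prompt or model changes gives a team an early signal of regressions before they reach code review.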

Conclusion

LLMs have become central to software. They make routine work faster and enable new AI-driven experiences, and this is changing the landscape.

But the real story is more nuanced than the common narrative. LLMs help most in software work when they are used as tools that work alongside people, not as replacements for them. The teams that do best in software over the next ten years will be those that combine capable AI with strong engineering habits.

The future is not human versus AI. It is systems where humans and machines work together.
